Walkthrough of Massive Models and Rendering Acceleration

The GAMMA research group is investigating techniques for rendering acceleration, with applications including walkthroughs of massive models.

- Logarithmic Perspective Shadow Maps

- Cache-efficient Layouts of Bounding Volume Hierarchies

- Cache-oblivious Mesh Layouts

- Interactive View-dependent Rendering of Massive Models

- Interactive Shadow Generation in Complex Environments

- Interactive View-dependent Rendering with Conservative Occlusion Culling in Complex Environments

- Rendering Acceleration Using Incremental Textured Depth Meshes

- Parallel Occlusion Culling for Interactive Walkthroughs Using Multiple Graphics Processing Units

- Interactive Walkthrough of Complex Environments

- Hierarchical Simplifications for Faster Display of Massive Geometric Environments

- Video-based Rendering Acceleration for Interactive Walkthroughs

- Appearance-preserving Simplification

- Visibility Culling Using Hierarchical Occlusion Maps

- Simplification Using Successive Mappings

- Interactive Display of Large-scale NURBS Models

- Simplification Envelopes

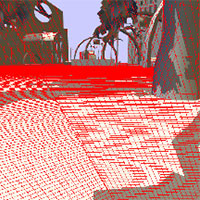

Logarithmic Perspective Shadow Maps

Brandon Lloyd, Naga K. Govindaraju, and Dinesh Manocha

The logarithmic perspective shadow map parameterization can reduce perspective aliasing artifacts for both point and directional light sources. The parameterization is a simple combination of a perspective projection with a logarithmic transformation. that produces tighter bounds on the aliasing error in the view frustum than other parameterizations. We extend three existing shadow map algorithms to use our parameterization. Compared with competing algorithms, logarithmic perspective shadow maps produce significantly less aliasing error. Equivalently, for the same error, logarithmic perspective shadow maps require significantly less storage and bandwidth. With hardware support, logarithmic perspective shadow map would have performance similar to the algorithms upon which they are based.

Cache-efficient Layouts of Bounding Volume Hierarchies

Sung-Eui Yoon and Dinesh Manocha

We present an algorithm to compute cache-efficient layouts of bounding volume hierarchies (BVHs) of polygonal models. We does not make any assumptions about the cache parameters or block sizes of the memory hierarchy. We introduce a new probabilistic model to predict the runtime access patterns of a BVH. Our layout computation algorithm utilizes parent-child and spatial localities between the accessed nodes to reduce both the number of cache misses and the size of working set. Also, our algorithm works well with spatial partitioning hierarchies including kd-trees. We use our algorithm to compute layouts of BVHs and spatial partitioning hierarchies of large models composed of millions of triangles.

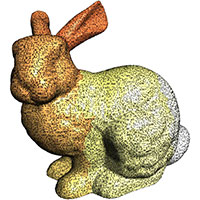

Cache-oblivious Mesh Layouts

Sung-Eui Yoon and Dinesh Manocha

We present a method for computing cache-oblivious layouts of large meshes that improve the performance of interactive visualization and geometric processing algorithms. In our approach we make only general assumptions on the locality of geometric algorithms and make no assumption with regard to the particular data access pattern or cache parameters of the memory hierarchy involved in the computation. Furthermore, our formulation extends directly to computing layouts of multi-resolution and bounding volume hierarchies of large meshes. We develop a simple and practical cache-oblivious metric to estimate cache misses. Computing a coherent mesh layout is reduced to a combinatorial optimization problem. We designed and implemented an out-of-core multilevel minimization algorithm and tested its performance on unstructured meshes composed of tens or hundreds of millions of triangles. Our layouts can significantly reduce the number of cache misses.

Interactive View-dependent Rendering of Massive Models

Sung-Eui Yoon, Brian Salomon, Russell Gayle, and Dinesh Manocha

We present an approach for interactive view-dependent rendering of massive models. Our algorithm combines view-dependent simplification, occlusion culling, and out-of-core rendering. We represent the model as a clustered hierarchy of progressive meshes. We use the cluster hierarchy for coarse-grained selective refinement and progressive meshes for fine-grained local refinement. We present an out-of-core algorithm for computation of a clustered hierarchy of progressive meshes that includes cluster decomposition, hierarchy generation, and simplification. We make use of novel cluster dependencies in the preprocess to generate crack-free, drastic simplifications at runtime. The clusters are used for occlusion culling and out-of-core rendering. We add a frame of latency to the rendering pipeline to fetch newly visible clusters from the disk and avoid stalls. The clustered hierarchy of progressive meshes reduces the refinement cost for view-dependent rendering by more than an order of magnitude as compared to a vertex hierarchy.

Interactive Shadow Generation in Complex Environments

Naga K. Govindaraju, Brandon Lloyd, Sung-Eui Yoon, Avneesh Sud, and Dinesh Manocha

We present an algorithm for interactive generation of hard-edged, umbral shadows in complex environments with a moving light source. Our algorithm is based on a hybrid approach that combines some of the efficiencies of image-precision techniques along with the image quality of object-precision methods. We present interactive algorithms based on levels of detail and visibility culling to compute the potential shadow-casters and shadow-receivers. We further reduce their size based on a novel cross-visibility culling algorithm. Finally, we use a combination of shadow polygons and shadow maps to generate shadows. We also present techniques for level-of-detail-selection that eliminate the artifacts in self-shadows. Our algorithm can generate sharp shadow edges and reduce aliasing.

Interactive View-dependent Rendering with Conservative Occlusion Culling in Complex Environments

Sung-Eui Yoon, Brian Salomon, and Dinesh Manocha

We present an algorithm combining view-dependent rendering and conservative occlusion culling for interactive display of complex environments. A vertex hierarchy of the entire scene is decomposed into a cluster hierarchy through a novel clustering and partitioning algorithm. The cluster hierarchy is then used for view-frustum and occlusion culling. Using hardware accelerated occlusion queries and frame-to-frame coherence, a potentially visible set of clusters is computed. An active vertex front and face list is computed from the visible clusters and rendered using vertex arrays.

Rendering Acceleration Using Incremental Textured Depth Meshes

Andy Wilson and Dinesh Manocha

We present an incremental algorithm to compute image-based simplifications of a large environment. We use an optimization-based approach to generate samples based on scene visibility, and from each viewpoint create textured depth meshes using sampled range panoramas of the environment. The optimization function minimizes artifacts such as skins and cracks in the reconstruction. We also present an encoding scheme for multiple textured depth meshes that exploits spatial coherence among different viewpoints. The resulting simplifications, incremental textured depth meshes, reduce preprocessing, storage, rendering costs and visible artifacts.

Parallel Occlusion Culling for Interactive Walkthroughs Using Multiple Graphics Processing Units

Naga K. Govindaraju, Avneesh Sud, Sung-Eui Yoon, and Dinesh Manocha

We present a parallel occlusion culling algorithm for interactive display of large environments. It uses a cluster of three graphics processing units to compute an occlusion representation, cull away occluded objects and render the visible primitives. Moreover, our parallel architecture reverses the role of two of the graphics processing units between successive frames to lower the communication overhead. We have combined the occlusion culling algorithm with pre-computed levels of detail and use it for interactive display of geometric datasets.

Interactive Walkthrough of Complex Environments

William V. Baxter III, Avneesh Sud, Naga K. Govindaraju, and Dinesh Manocha

GigaWalk is a system for interactive walkthrough of complex, gigabyte-sized environments. It combines occlusion culling and levels-of-detail and uses two graphics pipelines with one or more processors. We use a unified scene graph representation for multiple acceleration techniques, and we present novel algorithms for clustering geometry spatially, computing a scene graph hierarchy, performing conservative occlusion culling, and performing load-balancing between graphics pipelines and processors. GigaWalk has been used to render CAD environments composed of tens of millions of polygons at interactive rates.

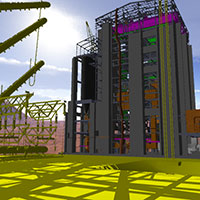

Hierarchical Simplifications for Faster Display of Massive Geometric Environments

Carl Erikson, Dinesh Manocha, and William V. Baxter III

We present an algorithm and a system for accelerated display of large environments. We represent a given geometric dataset using a scene graph and automatically compute levels of detail for each node in the graph. We further augment the levels of detail with hierarchical levels of detail that serve as higher fidelity drastic simplifications of entire branches of the scene graph, where drastic refers to a reduction in polygon count by an order of magnitude or more. Our rendering system leverages the unique properties of our hierarchical level of detail scene graph to allow a user to explicitly choose between a specified image quality or target frame rate. We efficiently render levels of detail and hierarchical levels of detail using display lists.

Video-based Rendering Acceleration for Interactive Walkthroughs

Andy Wilson, Ming C. Lin, and Dinesh Manocha

We present an approach for faster rendering of large synthetic environments using video-based representations. We decompose the large environment into cells and pre-compute video-based impostors using MPEG compression to represent sets of objects that are far from each cell. At runtime, we decode the MPEG streams and use displaying algorithms that provide nearly constant-time random access to any frame.

Appearance-preserving Simplification

Jonathan D. Cohen and Dinesh Manocha

We present an algorithm for appearance-preserving simplification. Not only does it generate a low-polygon-count approximation of a model, but it also preserves the appearance. This is accomplished for a particular display resolution in the sense that we properly sample the surface position, curvature, and color attributes of the input surface. We convert the input surface to a representation that decouples the sampling of these three attributes, storing the colors and normals in texture and normal maps, respectively. Our simplification algorithm employs a new texture deviation metric, which guarantees that these maps shift by no more than a user-specified number of pixels on the screen. The simplification process filters the surface position, while the runtime system filters the colors and normals on a per-pixel basis.

Visibility Culling Using Hierarchical Occlusion Maps

Hansong Zhang, Dinesh Manocha, Thomas Hudson, and Kenneth E. Hoff III

We present hierarchical occlusion maps for visibility culling on complex models with high depth complexity. The culling algorithm uses an object space bounding volume hierarchy and a hierarchy of image space occlusion maps. Occlusion maps represent the aggregate of projections of the occluders onto the image plane. For each frame, the algorithm selects a small set of objects from the model as occluders and renders them to form an initial occlusion map, from which a hierarchy of occlusion maps is built. The occlusion maps are used to cull away a portion of the model not visible from the current viewpoint. The algorithm is applicable to all models and makes no assumptions about the size, shape, or type of occluders. It supports approximate culling in which small holes in or among occluders can be ignored.

Simplification Using Successive Mappings

Jonathan D. Cohen and Dinesh Manocha

We present the use of mapping functions to automatically generate levels of detail with known error bounds for polygonal models. We develop a piecewise linear mapping function for each simplification operation and use this function to measure deviation of the new surface from both the previous level of detail and from the original surface. In addition, we use the mapping function to compute appropriate texture coordinates if the original map has texture coordinates at its vertices. Our overall algorithm uses edge collapse operations. We present rigorous procedures for the generation of local planar projections as well as for the selection of a new vertex position for the edge collapse operation.

Interactive Display of Large-scale NURBS Models

Subodh Kumar and Dinesh Manocha

We present serial and parallel algorithms for interactive rendering of large-scale and complex NURBS models. The algorithms tessellate the NURBS surfaces into triangles and render them using triangle rendering engines. The main characteristics of the algorithms are handling of arbitrary surface topologies, the exploitation of spatial and temporal coherence, optimal polygonization, and back-patch culling. Polygonization anomalies like cracks and angularities are also avoided.

Simplification Envelopes

Jonathan D. Cohen and Dinesh Manocha

Simplification envelopes is a method for automatically generating level-of-detail hierarchies. The user specifies a single error tolerance, the maximum surface deviation of the simplified model from the original model, and a new, simplified model is generated. The method hinges on the generation of two envelope surfaces which surround the original surface. The distance between the envelope surfaces and the original surface is always less than the user-specified tolerance. The output model is generated by making successive modifications to the original model, always making sure that the resulting surface lies between the two envelope surfaces.

Leave a Reply

You must be logged in to post a comment.